Trying Something New!

Haven’t blog for a while since I was quite busy with customers as well as the transition to my new role… Now I am finally settle down and I should start writing again!

After another 2 years in Milwaukee, I am passing the largest Siebel replacement project to my colleagues/friends to complete. The project has been very successful! We’ve rollout the CRM system to more than 13,000 users and there are approximately 4,000 more users to go! My friends will be there for few more months to wrap it up.

For this one, I’ve enjoyed working with many great people there, got a chance to build some good relationships and I’ve learnt a lot from the people and the project. I would like to point out that I have never seen a huge company that can adapt change so fast! Just for giggling, we changed project approach 3 times to make the project more efficient so we can provide values to the end users quicker. The team just went with the change and took everything positively. Also we had fun throughout the entire process!

For every project of mine, I like to provide some fun facts:

- Car Miles: >30,000 miles (need another new car now)

- Hotel Nights: > 350 nights

- Pound of Sushi: > 100 pound consumed (3 good sushi places in Milwaukee, 1 sushi place has all you can eat)

- Pound of Cheese: > 10 pound

- Summerfest (Largest Music Festival): 2

- Cold Winters: 2

- Coldest temperature in Milwaukee: -20F

- …

Last month I took a new role at Microsoft as a Solution Architect/Program Manager, working very closely with the CRM R&D team to support our largest global enterprise and strategic customers/partners on their Dynamics solutions, focus on CRM online! For this new role, I’ll have the opportunities to help many other customers, especially customers in China since I speak the language and always wanted to help.

Since I don’t have to travel as much as before, I’ll have more time to spend with my family! However I’ll be losing all my airline and hotel status (totally fine with it). :=)

CRM Lookup error with Google Chrome 38–Fixed

I haven’t get a chance to blog for a while and I need to pick it backup… Some of you may seen a lookup error with Chrome when Google released version 38.0.2125.101, as indicated on this blog article. Everyone is expecting Microsoft to solve this Google issue. ![]() But I think Google realized that many other applications got impacted and they fixed their own issue, as indicated on this bug in their bug system. So to resolve the CRM lookup error, you just need to update your Chrome browser version 38.0.2125.111. As always, please test other functionalities before doing a browser upgrade.

But I think Google realized that many other applications got impacted and they fixed their own issue, as indicated on this bug in their bug system. So to resolve the CRM lookup error, you just need to update your Chrome browser version 38.0.2125.111. As always, please test other functionalities before doing a browser upgrade.

I hope this helps and have a great CRM day!

CRM 2013 Reporting and SQL 2012 AlwaysOn

Just want to resurface a blog by a colleague from UK on CRM Reporting and SQL 2012 AlwaysOn since I am implementing it with my customer. Adding it to my blog just in case I need to do it again in the future.

CRM 2013 Maintenance Jobs

The Maintenance jobs for CRM 2013 is similar to the CRM 2011 jobs if they are not the same. I had to dig into the details of each of the jobs for my project. These are my findings and I hope it will be useful for everyone. Please remember to reschedule these maintenance jobs after the installation of CRM is completed.

|

Maintenance Job Name |

Purpose |

Default Frequency/ Recommendation |

|

Deletion Service |

The deletion service maintenance operation now cleans up subscription tracking records for deleted metadata objects as they expire.

|

By default, the job executes every 24 hours, reschedule the job to run at non-peak hours. E.g. 11:00PM at night |

|

Indexing Management |

Validates that system-managed indexes exist for all entities and recreates any missing indexes. This is more for CRM Online and only impacts us during configuration of the solution. It automatically creates index for each column used in search for Quick Find if the index is not already created in the CRM database |

By default, the job executes every 24 hours, reschedule the job to run at non-peak hours. E.g. 11:00PM at night |

|

Reindex All |

Reorganizes/rebuilds fragmented indexes depending on the amount of fragmentation. It execute the p_ReindexAll stored procedure, it selects all indexes in the database with greater than 30% fragmentation and runs ReIndex on each while in online mode. Run while online mode means indexes are not taken offline while reindex is happening they still are active and are functioning and can be used. Large enterprise CRM implementation with big data volume should disable this CRM reindex job if a custom job is created for recreating the indexes and with run update stats. |

By default, the job executes every 24 hours, disable the job by setting the Job Next Run value to the future. E.g. 12/31/2999 |

|

Cleanup Inactive Workflow Assemblies |

Seeks custom workflow assemblies that are no longer referenced in workflow rules or in-process jobs. Those unreferenced assemblies are then deleted. Consider the scenario where you register version 2.0 of a custom workflow assembly. You may update your rules to reference the new version, but some in-progress jobs may still be referencing version 1.0. Once those jobs have completed, this maintenance job will clean up the version 1.0 assembly that is no longer referenced by rules/jobs. It executes the p_CleanupInactiveWorkflowAssemblies stored procedure. |

By default, the job executes every 24 hours, reschedule the job to run at non-peak hours. E.g. 11:00PM at night |

|

Create Audit Partition |

Alters the partitioning scheme for the auditbase table (SQL Enterprise only). |

By default, the job executes every month, reschedule the job to run at non-peak hours. E.g. 11:00PM at night |

|

Check for MUI Updates |

Detects upgrades to language (MUI) packs and schedules additional async operations to perform individual language provisioning. |

By default, the job executes every 24 hours, if you don’t have language pack installed, you can disable the job by setting the Job Next Run value to the future. E.g. 12/31/2999 |

|

Refresh Entity Row Count |

Refreshes the Record Count snapshot statistics leveraged enhanced query plans. This job is important because it define how Quick Find works, it uses the counts to come up with the correct query plan. |

By default, the job executes every 24 hours, reschedule the job to run at non-peak hours. E.g. 11:00PM at night |

|

Refresh Sharing Count |

Refreshes the POA read snapshot statistics leveraged in enhanced query plans. This job is important because it define how Quick Find works, it uses the counts to come up with the correct query plan. |

By default, the job executes every 24 hours, reschedule the job to run at non-peak hours. E.g. 11:00PM at night |

The Deletion Service executes the following SQL queries.

|

exec sp_executesql N’select SubscriptionId, SystemUserId from Subscription (nolock) where datediff(dd, LastSyncStartedOn, getutcdate()) >= @expireSubscriptionInDays’,N’@expireSubscriptionInDays int’,@expireSubscriptionInDays=90 |

|

exec sp_executesql N’delete from PrincipalObjectAccess where (AccessRightsMask = 0 or AccessRightsMask is null) and (InheritedAccessRightsMask = 0 or InheritedAccessRightsMask is null) and VersionNumber <= @versionNumberExpired’,N’@versionNumberExpired bigint’,@versionNumberExpired=404676 |

|

exec sp_executesql N’delete from SubscriptionTrackingDeletedObject where TimeStamp <= convert(timestamp, @versionNumberExpired) SELECT @@ROWCOUNT’,N’@versionNumberExpired bigint’,@versionNumberExpired=404676 |

|

exec sp_executesql N’ declare @now DateTime = getutcdate(); DELETE FROM [MetadataSyncTrackingDeletedObject] WHERE datediff(dd, CreatedOn, @now) >= @expireSubscriptionInDays; declare @rowCount int; SELECT @rowCount = @@ROWCOUNT; declare @columnExists bit = 0; IF (EXISTS (SELECT * FROM sys.columns WHERE object_id = OBJECT_ID(N”OrganizationBase”) AND name = N”MetadataSyncLastTimeOfNeverExpiredDeletedObjects”)) BEGIN set @columnExists = 1; END SELECT @rowCount as [RowCount], @now as [Now], @columnExists as [ColumnExists]; ‘,N’@expireSubscriptionInDays int’,@expireSubscriptionInDays=90 |

|

exec sp_executesql N’ UPDATE OrganizationBase SET MetadataSyncLastTimeOfNeverExpiredDeletedObjects = null WHERE MetadataSyncLastTimeOfNeverExpiredDeletedObjects is not null AND MetadataSyncLastTimeOfNeverExpiredDeletedObjects <= DATEADD(dd, -@expireSubscriptionInDays, @now) ‘,N’@now datetime,@expireSubscriptionInDays int’,@now=’2014-04-02 16:13:42.993′,@expireSubscriptionInDays=90 |

|

exec sp_executesql N’ if exists (select * from WorkflowWaitSubscriptionBase (nolock) where IsModified = 1) begin update AsyncOperationBase set StateCode = @readyState, StatusCode = @waitingStatus, ModifiedOn = @modifiedOn, ModifiedBy = CreatedBy where StateCode = @suspendedState and RetryCount < @maxRetries and AsyncOperationId in (select AsyncOperationId from WorkflowWaitSubscriptionBase where IsModified = 1) end’,N’@readyState int,@suspendedState int,@waitingStatus int,@maxRetries int,@modifiedOn datetime’,@readyState=0,@suspendedState=1,@waitingStatus=0,@maxRetries=10,@modifiedOn=’2014-04-02 16:13:43′ |

Reindex All executes the p_ReindexAll stored procedure below.

|

/***********************************************************************************************/ /* RETURN CODES: */ /* 0 – Success */ /* 1 – Partial success – some indexes could not be rebuilt */ /* 5 – Invalid input parameter(s) */ /***********************************************************************************************/ /* Returns a) Always first recordset – one row with 4 integer columns: ResultCode – see RETURN CODES TotalIndexesToRebuild – total count of indexes detected to be rebuild RebuiltWithOnlineON – count of indexes rebuilt with option ONLINE = ON RebuiltWithOnlineOFF – count of indexes rebuilt with option ONLINE = OFF (can’t be rebuilt with ONLINE = ON) b) Always second recordset – see @errors table c) Only when @Verbose=1, then the second recordset with detailed info about all indexes */ BEGIN SET NOCOUNT ON DECLARE @TotalIndexesToRebuild int = 0, @RebuiltWithOnlineON int = 0, @RebuiltWithOnlineOFF int = 0 –Get start time for max run time tracking DECLARE @MaxTime datetime SELECT @MaxTime = dateadd(ss,ISNULL(@MaxRunTime,0), GetUTCDate()) –Account for nulls in parameters, set to default values SET @FragRebuildPct = ISNULL(@FragRebuildPct, 30) SET @AllIndexTypes = ISNULL(@AllIndexTypes, 0) SET @Verbose = ISNULL(@Verbose, 0) SET @MinPages = ISNULL(@MinPages, 25) SET @Online = ISNULL(@Online, 1) –Validate parameters IF ((@MaxRunTime <= 0) OR (@AllIndexTypes not in (0,1)) OR (@Verbose not in (0,1)) OR (@Online not in (0,1)) OR (@MinPages < 1) OR (@FragRebuildPct > 100) OR (@FragRebuildPct < 0)) BEGIN PRINT ‘Invalid Parameter value. Valid values are:’ PRINT ‘MaxRunTime > 0,’ PRINT ‘MinPages > 0’ PRINT ‘FragRebuildPct in {NULL,0..100}’ PRINT ‘AllIndexTypes in {0,1}’ PRINT ‘Verbose in {0,1}’ PRINT ‘Online in {0,1}’ SELECT 5 as ResultCode, @TotalIndexesToRebuild as TotalIndexesToRebuild, @RebuiltWithOnlineON as RebuiltWithOnlineON, @RebuiltWithOnlineOFF as RebuiltWithOnlineOFF RETURN 5 END DECLARE @indexes table ( SchemaName sysname, TableName sysname, IndexName sysname, OldFrag int, NewFrag int null, processed bit ) DECLARE @errors table ( Number int, Severity int, State int, –Message nvarchar(4000), — can be found by select * from sys.messages m where message_id = Number and m.language_id = 1033 OnlineOn bit, Statement NVarchar(2048) ) INSERT INTO @indexes SELECT schema_name(o.schema_id), object_name(s.object_id), i.name, s.avg_fragmentation_in_percent, null, 0 FROM sys.dm_db_index_physical_stats (db_id(),NULL,NULL,NULL,NULL) s JOIN sys.objects o on (s.object_id = o.object_id) JOIN sys.indexes i on (s.object_id = i.object_id and s.index_id = i.index_id) WHERE s.avg_fragmentation_in_percent > @FragRebuildPct — defrag only if more than x% fragmented and i.type in (1, @AllIndexTypes + 1) — (1,2) — cannot defrag non-indexes(0-heap, 1- clustered, 2-nonclustered, 3-xml) and s.page_count >= @MinPages — select only if the index spans multiple pages ORDER BY s.avg_fragmentation_in_percent desc select @TotalIndexesToRebuild = @@rowcount DECLARE @SchemaName sysname, @TableName sysname, @IndexName sysname, @sqlTemplate NVarchar(2048), @sql NVarchar(2048) DECLARE @retry bit ,@onlineON bit DECLARE IndexCursor CURSOR LOCAL FOR SELECT SchemaName, TableName, IndexName from @indexes order by OldFrag desc OPEN IndexCursor FETCH NEXT FROM IndexCursor INTO @SchemaName, @TableName, @IndexName WHILE ((@@FETCH_STATUS = 0) AND ((GetUTCDate() < @MaxTime) OR (@MaxRunTime IS NULL)) ) BEGIN select @sqlTemplate = ‘ALTER INDEX [‘+ @IndexName +’] ‘+ ‘ON [‘+@SchemaName+’].[‘+@TableName+’] REBUILD WITH ‘+ ‘( PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON, SORT_IN_TEMPDB = OFF, ONLINE = ‘ IF (@Online=1) select @sql = @sqlTemplate + ‘ON )’ ELSE select @sql = @sqlTemplate + ‘OFF )’ select @retry = 1, @onlineON = @Online while (@retry = 1) BEGIN BEGIN TRY IF (@Verbose=1) PRINT @sql EXEC (@sql) select @retry = 0 IF (@onlineON=1) SELECT @RebuiltWithOnlineON = @RebuiltWithOnlineON +1 ELSE SELECT @RebuiltWithOnlineOFF = @RebuiltWithOnlineOFF +1 END TRY BEGIN CATCH insert into @errors select ERROR_NUMBER(), ERROR_SEVERITY(), ERROR_STATE(), @onlineON, @sql IF (@onlineON=1 and ERROR_NUMBER() = 2725) BEGIN — Handle the possible exception below: rebuild index offline. Only SQL2012 has THROW –ErrorNumber ErrorMessage –2725 An online operation cannot be performed for index ‘?’ because the index contains column ‘?’ of data type text, ntext, image, varchar(max), nvarchar(max), varbinary(max), xml, or large CLR type. For a non-clustered index, the column could be an include column of the index. For a clustered index, the column could be any column of the table. If DROP_EXISTING is used, the column could be part of a new or old index. The operation must be performed offline. select @sql = @sqlTemplate + ‘OFF )’ select @onlineON = 0 END ELSE select @retry = 0 END CATCH END UPDATE @indexes SET processed=1 WHERE SchemaName=@SchemaName and TableName=@TableName and IndexName=@IndexName FETCH NEXT FROM IndexCursor INTO @SchemaName, @TableName, @IndexName END CLOSE IndexCursor DEALLOCATE IndexCursor IF (@Verbose=1) BEGIN UPDATE @indexes SET NewFrag = avg_fragmentation_in_percent FROM sys.dm_db_index_physical_stats (db_id(),NULL,NULL,NULL,NULL) s JOIN sys.objects o on (s.object_id = o.object_id) JOIN sys.indexes i on (s.object_id = i.object_id and s.index_id = i.index_id) WHERE SchemaName=schema_name(o.schema_id) and TableName = object_name(s.object_id) and IndexName = i.name END DECLARE @ResultCode int IF Exists(select * from @indexes where processed = 0) BEGIN PRINT ‘Did not process all indexes due to @MaxRunTime constraint’ SELECT @ResultCode = 1 END ELSE BEGIN SELECT @ResultCode = 0 END — Return results SELECT @ResultCode as ResultCode, @TotalIndexesToRebuild as TotalIndexesToRebuild, @RebuiltWithOnlineON as RebuiltWithOnlineON, @RebuiltWithOnlineOFF as RebuiltWithOnlineOFF SELECT * from @errors IF (@Verbose=1) SELECT * FROM @indexes order by OldFrag desc RETURN @ResultCode |

Clean Up Inactive Workflow Assemblies executes the p_CleanupInactiveWorkflowAssemblies stored procedures below:

|

DECLARE @ActivationsToDelete TABLE ( WorkflowId UNIQUEIDENTIFIER ) INSERT @ActivationsToDelete SELECT WorkflowId FROM WorkflowBase WHERE Type = 2 — it’s a workflow activation AND StateCode = 0 — not active AND WorkflowId NOT IN (SELECT OwningExtensionId FROM AsyncOperationBase WHERE OperationType = 10 AND OwningExtensionId IS NOT NULL) DELETE FROM WorkflowDependencyBase WHERE WorkflowId IN (SELECT WorkflowId from @ActivationsToDelete) DELETE FROM DependencyNodeBase WHERE ComponentType = 29 AND ObjectId IN (SELECT WorkflowId from @ActivationsToDelete) DELETE FROM WorkflowBase WHERE WorkflowId IN (SELECT WorkflowId from @ActivationsToDelete) |

Dynamics CRM Outlook Client and Office

Customer ask me a question today on the CRM Outlook client. The question is if my Windows is 64 bit, my Office is 32 bit, what version of the CRM Outlook Client should I install?

The answer is the Outlook Client should align with Office. Therefore if Office is 32 bit, install the 32 bit client. If Office is 64 bit, install the 64 bit client.

Learned something new on IIS Compression

Dynamics CRM is a web app, therefore it leverages IIS. Usually IIS compression is enabled by default since we would like to reduce bandwidth usage and also reduce the page load times. The .aspx files sent by the server to the browser is highly compressed by algorithms such as gzip. IIS 7+ offers the ability to cache not only compressed static files, it also compressed dynamic files. Modern browsers have the ability to decompress the incoming files.

IIS Compression uses the CPU to compress the files, I’ve learned that compression is automatically switch OFF when CPU usage is above 90% for Dynamic file and 100% for Static files. It will automatically switch back ON when the CPU drops back to 50% for both Dynamic and Static files. You can modify these limits in the applicationHost.config file which is normally in the C:\Windows\System32\inetsrv\config folder.

I want to bring this to everyone’s attention because usually during CRM performance testing, we often push the server CPUs to their limits. Please look at your CPU usage on your front end servers to make sure that they don’t hit the IIS compression limits and you will have a more accurate performance test.

Manually Apply CRM Update Rollup to Single Tenant

In an enterprise CRM project, you might run into a large number of tenants in an environment since there are multiple teams working on the project. Not all of the teams want to have the patch apply to their tenant due to many reasons… My customer asked me if there’s a way for them to apply the CRM Update Rollup to a single tenant instead of all tenants in an environment. I am thinking there’s got to be a way since CRM Online applies the patches at the tenant level, so I reach out to my colleagues in PG and Premier and sure there’s a way to do it. ![]()

By default, when you install a CRM Update Rollup, it will automatically applies the update to all of the tenants (include database updates). If you want to disable the automatic database update, please execute the following PowerShell script.

add-pssnapin Microsoft.Crm.Powershell

$itemSetting = new-object 'System.Collections.Generic.KeyValuePair[String,Object]'("AutomaticallyInstallDatabaseUpdates",0)

$configEntity = New-Object "Microsoft.Xrm.Sdk.Deployment.ConfigurationEntity"

$configEntity.LogicalName="Deployment"

$configEntity.Attributes = New-Object "Microsoft.Xrm.Sdk.Deployment.AttributeCollection"

$configEntity.Attributes.Add($itemSetting)

Set-CrmAdvancedSetting -Entity $configEntity

After the script is executed, you may go to Deployment Manager and apply the patch to the DB at the tenant level. Just want to clarify that you are still installing the update rollup bits to the servers, and the above script will disable the updates to the CRM databases. All tenants will get the new UR bits, there are no ways around it, but the bits are backward compatible so they will work with older database schema.

I hope this will help you in your CRM project!

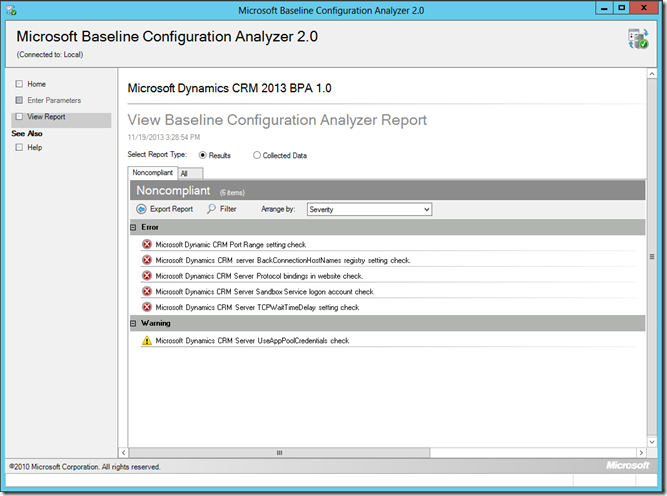

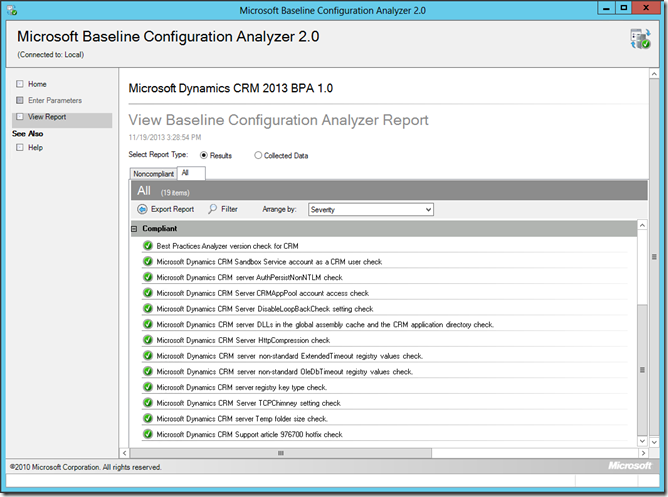

Microsoft Dynamics CRM 2013 Best Practice Analyzer

Microsoft releases Microsoft Dynamics CRM 2013 Best Practices Analyzer today. This is a diagnostic tool that gathers information about Microsoft Dynamics CRM 2013 server roles and offers recommendations.

The Microsoft Dynamics CRM 2013 Best Practices Analyzer is a diagnostic tool that performs the following functions:

- Gathers information about the CRM 2013 server roles that are installed on that server.

- Determines if the configurations are set according to the recommended best practices.

- Reports on all configurations, indicating settings that differ from recommendations.

- Indicates potential problems in the CRM 2013 features installed.

- Recommends solutions to potential problems.

You may download the CRM 2013 Best Practice Analyzer from the Microsoft download center.

This diagnostic tool requires Microsoft Baseline Configuration Analyzer 2.0. Microsoft Baseline Configuration Analyzer 2.0 (MBCA 2.0) can help you maintain optimal system configuration by analyzing configurations of your computers against a predefined set of best practices, and reporting results of the analyses. You may download the Baseline Configure Analyzer 2.0 from the Microsoft download center as well.

The tool is pretty easy to install and use. I installed ran the tool in less than 5 minutes on my Azure lab. Sample output below.

How to change organization URL in CRM 2013 MoCA client?

At this moment, The only way to change the org URL is to uninstall and reinstall the MoCA app. Happy to be corrected. ![]()

Determine the # of Outlook Client Users

I got another interesting question from my customer today. How many users configured the CRM Outlook client? Thanks to my PFE friend, KG, he provided us the SQL query to get that information.

To get the # of subscription clients in CRM, you may execute the query against the [ORGNAME]_MSCRM database below.

select distinct MachineName from SubscriptionClients